Researchers from the Department of Ophthalmology at Atlanta’s University School of Medicine evaluated how well AI chatbots performed when triaging ophthalmic conditions.

The research was prompted by inconsistent access to human expertise for patients who have ophthalmic (eye) complaints.

Before visiting a physician, patients have often already turned to ‘Dr. Google’ to see what internet resources like WebMD say about their symptoms. With the advent of AI, more people are forgoing Google and asking tools like ChatGPT for advice.

With ophthalmic conditions, prompt treatment is often needed to avoid vision loss. The researchers wanted to evaluate how good, or bad, AI chatbots were at triage: judging how serious a condition is and how urgently it needs care.

At best, a patient might wrongly conclude their condition is serious and see a specialist unnecessarily. At worst, bad advice from an internet resource could lead them to delay necessary treatment.

The researchers wrote 24 vignettes of hypothetical patients with common ophthalmic conditions. The scenarios were used as prompts for ChatGPT and Bing Chat, and were also sent to 22 ophthalmology trainees.

After each description of symptoms was entered, the chatbot was asked, “What condition could I have?” Then, to gauge triage urgency, it was asked, “Should I go to the ER or eye doctor today, see the doctor in a couple of days, follow up in a couple weeks, or treat myself at home?”

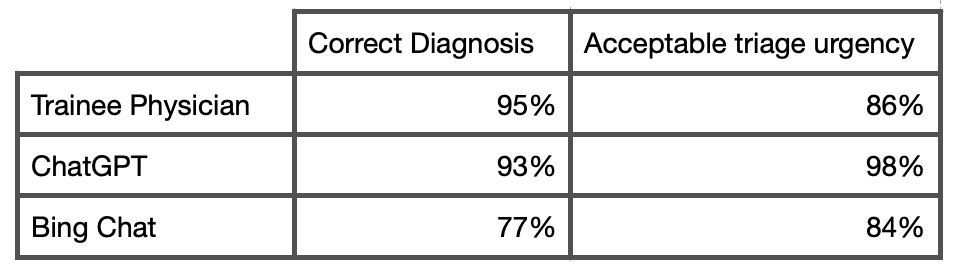

The chatbots and the trainees were scored on the accuracy of their diagnoses and on whether they correctly judged triage urgency.

The researchers concluded that “ChatGPT using the GPT-4 model offered high diagnostic and triage accuracy that was comparable to the physician respondents, with no grossly inaccurate statements.”

Bing Chat received a less glowing assessment. The paper concluded that it had “lower accuracy, some instances of grossly inaccurate statements, and a tendency to overestimate triage urgency.”

AI is already being used, with impressive results, to detect ophthalmic and other diseases from retinal scans. ChatGPT’s recent mediocre performance on pediatric medicine tests highlights the need for caution when relying on AI, but those tests were done with GPT-3.5.

The pediatric researchers might have seen better results had they used GPT-4, as this ophthalmic triage study did.

The promising results prompted the researchers to conclude their paper by saying that “Ophthalmologists should be prepared for a new paradigm in healthcare delivery as the lay public turns to AI chatbots to address personal health needs.”

If you ask ChatGPT Plus about the trouble you’re having with your eye, it seems you’ll get advice as good as, or better than, what medical trainees would give. Bing Chat? Not so much.