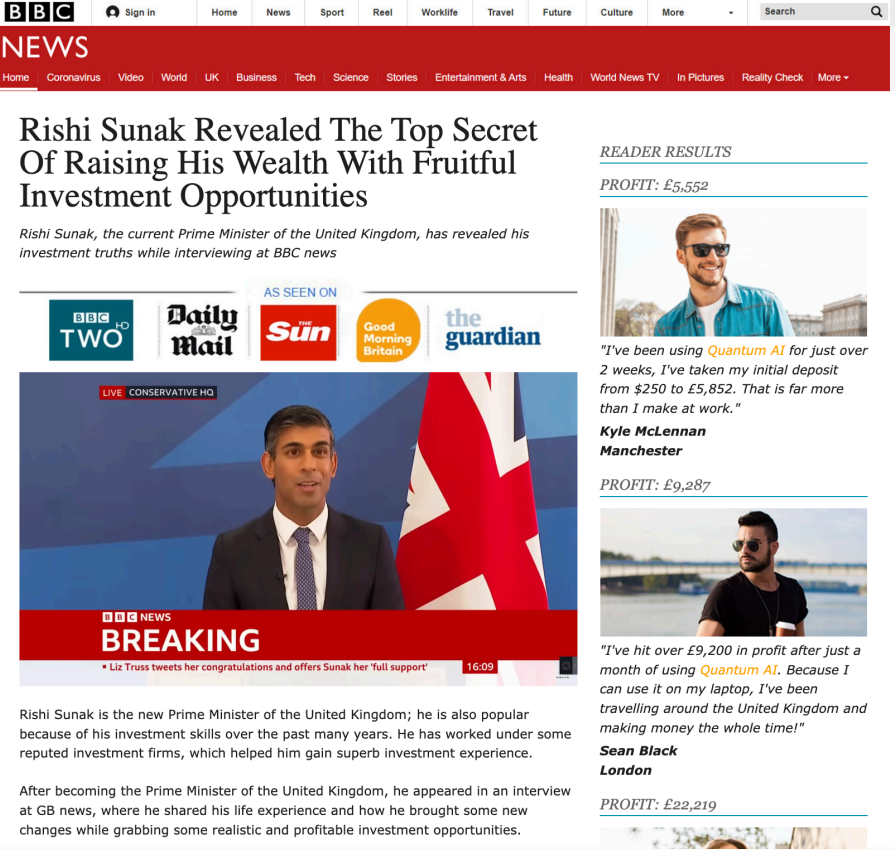

Over 100 deep fake video advertisements featuring UK Prime Minister Rishi Sunak have been promoted on Facebook.

The online communications company Fenimore Harper conducted the research and published its findings in a report.

The 143 deep fake adverts may have reached as many as 400,000 people and originated from countries including the US, Turkey, Malaysia, and the Philippines. Ad expenditures likely exceeded £12,929.

One particularly striking example includes a fabricated clip of BBC newsreader Sarah Campbell falsely reporting a scandal involving Sunak and an app allegedly launched by Elon Musk.

The advert claims Elon Musk has “launched an application capable of autonomously conducting stock and market transactions” and goes on to say, “I personally can vouch for the reliability of this investment platform.”

The advert led to a spoofed BBC News page promoting a scam investment.

Marcus Beard, the founder of Fenimore Harper, explained, “With the advent of cheap, easy-to-use voice and face cloning, it takes very little knowledge and expertise to use a person’s likeness for malicious purposes.”

He further highlighted the issue of insufficient moderation of paid advertising on platforms like Facebook, noting that “These adverts are against several of Facebook’s advertising policies. However, very few of the ads we encountered appear to have been removed.”

In response, a UK government spokesperson stated, “We are working extensively across government to ensure we are ready to rapidly respond to any threats to our democratic processes through our defending democracy taskforce and dedicated government teams.”

“Our Online Safety Act goes further by putting new requirements on social platforms to swiftly remove illegal misinformation and disinformation – including where it is AI-generated – as soon as they become aware of it.”

The BBC, acknowledging the increasing prevalence of disinformation, urged vigilance. A spokesperson stated, “We launched BBC Verify in 2023 to address the growing threat of disinformation – investing in a highly specialised team with a range of forensic and open source intelligence (OSINT) to investigate, factcheck, verify video, counter disinformation, analyse data and explain complex stories.”

“We build trust with audiences by showing how BBC journalists know the information they are reporting, and offer explainers on how to spot fake and deepfake content. When we become aware of fake BBC content we take swift action.”

Meta, the parent company of Facebook, has also addressed these concerns. A spokesperson from Meta commented, “We remove content that violates our policies whether it was created by AI or a person.”

The increasing sophistication and prevalence of AI-generated deep fakes, especially in the context of political campaigns, raise serious questions about the integrity of information in the era of AI.

AI-manipulated content pressures elections

As the world braces for a series of high-profile elections in 2024 and 2025, deep fake misinformation in political campaigns is becoming a pervasive global issue.

In Slovakia, AI-manipulated audio falsely portraying political figures surfaced just before the election, raising alarms about the capability of AI to distort political narratives.

Bangladesh’s national election witnessed similar challenges, where reports highlighted pro-government groups’ use of AI-generated news clips to sway public opinion.

Major technology companies, like Google and Meta, have started implementing policies for political advertisements, mandating disclosure of any form of AI or digital alterations. However, these measures often fall short.