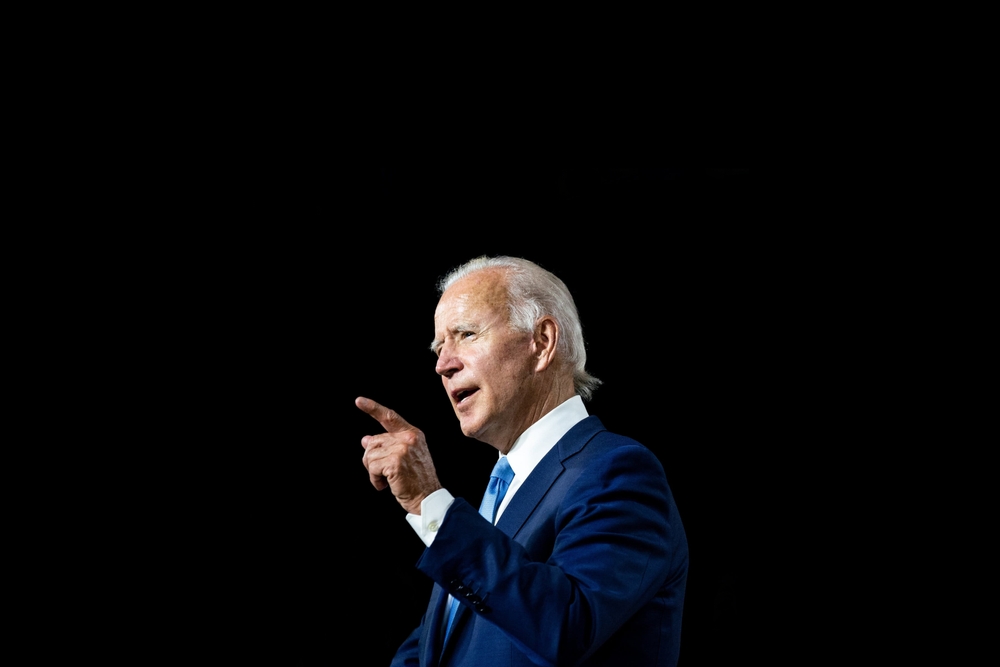

The Biden administration is encouraging cloud service providers like Amazon, Google, and Microsoft to identify foreign users developing AI technologies, particularly those in China.

A new draft rule could require companies to disclose the identities and IP addresses of overseas customers engaged in AI projects.

The measure aims to restrict access to the data centers and servers essential for AI development, a sign of escalating tensions between the US and Chinese AI industries.

Microsoft’s Vice President of Government Affairs, Fred Humphries, said the company is already gearing up to implement the draft rule, stating, “We welcome know-your-customer and cybersecurity requirements for AI infrastructure and look forward to providing input on the draft rule.”

China’s AI industry was initially slow to adapt to the rising influence of generative AI technologies.

However, that changed in mid-to-late 2023, when Chinese tech giants like Baidu and Tencent began releasing their own public products. Baidu has claimed to have trained a language model on par with OpenAI’s GPT-4.

The Chinese government took great care to ensure that products reflected the country’s socialist values and didn’t criticize, intentionally or unintentionally, the government or its leader, Xi Jinping. Baidu’s ERNIE Bot was found to heavily censor political topics.

In June last year, Xi Jinping said of generative AI, “We must be prepared for worst-case and extreme scenarios, and be ready to withstand the major test of high winds, choppy waters, and even dangerous storms.”

Meanwhile, in the US, the Biden-Harris administration has implemented numerous strategies to slow down China’s AI industry. These have primarily involved restricting access to the high-end hardware, made by companies like Nvidia, that is used to train AI models.

Commerce Secretary Gina Raimondo emphasized the Biden administration’s focus on neutralizing AI-related national security threats, stating, “These models getting in the hands of non-state actors or people that aren’t our allies is very dangerous.”

While US-China relations are extremely frosty on the surface, the two countries have reportedly collaborated on AI safety in secretive meetings.

Nevertheless, this new rule, open for public comment until April 29, reflects the US’s broader strategy to maintain a technological edge and safeguard national security interests.