In late May, New York lawyer Steven A Schwartz used ChatGPT to research legal cases on behalf of a client.

Schwartz and a colleague from the same law firm, Peter LoDuca, appeared before a judge on Thursday to explain their actions.

At the hearing, both lawyers confessed to referencing false legal cases generated by ChatGPT.

Schwartz used ChatGPT to locate legal precedents to support a client’s case against the Colombian airline Avianca. The AI returned several false cases and one real case with grossly altered details.

Schwartz quoted these in the plaintiff’s brief, but when US District Judge Kevin Castel received it, he quickly noticed the bogus cases and said, “Six of the submitted cases appear to be bogus judicial decisions with bogus quotes and bogus internal citations.”

“I did not comprehend that ChatGPT could fabricate cases,” Schwartz confessed to Castel, admitting that he had been “operating under a misconception…that this website was obtaining these cases from some source I did not have access to.”

Judge Castel confronted them with a particular fabricated legal case that should’ve struck Schwartz as false. The case was first described as a wrongful death case but later transformed into a case about a man who missed a flight and was forced to pay additional expenses.

In other words, even to a layperson, it was probably evident that this case was false, so Schwartz likely didn’t read the AI’s output properly. “Can we agree that’s legal gibberish?” Castel said of the case.

Schwartz apologized profusely, saying, “I would like to sincerely apologize,” and added that he felt “embarrassed, humiliated and extremely remorseful.”

LoDuca admitted that he didn’t adequately review the material Schwartz compiled. He said, “It never dawned on me that this was a bogus case” and that the outcome “pains me to no end.”

On the question of whether the duo will be punished, attorney Ronald Minkoff argued that the pair’s actions “resulted from carelessness, not bad faith” and thus should not be penalized.

Minkoff said, “Mr. Schwartz, who barely does federal research, chose to use this new technology. He thought he was dealing with a standard search engine.”

The final decision on sanctions is pending.

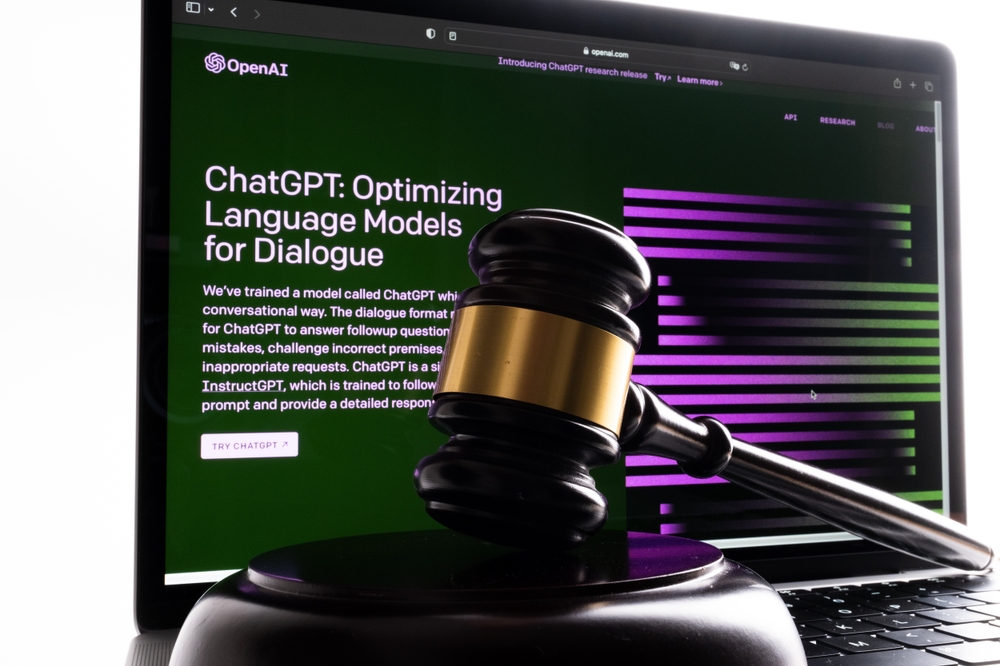

AI ‘hallucinations’ pose both ethical and legal issues

OpenAI is facing a series of legal cases, including three cases of potential libel that arose when ChatGPT’s output contained false and defamatory information.

This case is different: it is an example of misconduct resulting from reliance on ChatGPT’s output.

Daniel Shin, an adjunct professor and assistant director of research at the Center for Legal and Court Technology at William & Mary Law School, said, “This was the first documented instance of potential professional misconduct by an attorney using generative AI.”

It was only a matter of time before someone made this mistake. Schwartz happened to be the first (that we know of), setting a precedent he probably didn’t expect to set.