On Wednesday, the European Union made significant progress toward implementing the AI Act, the world’s first piece of comprehensive legislation for AI.

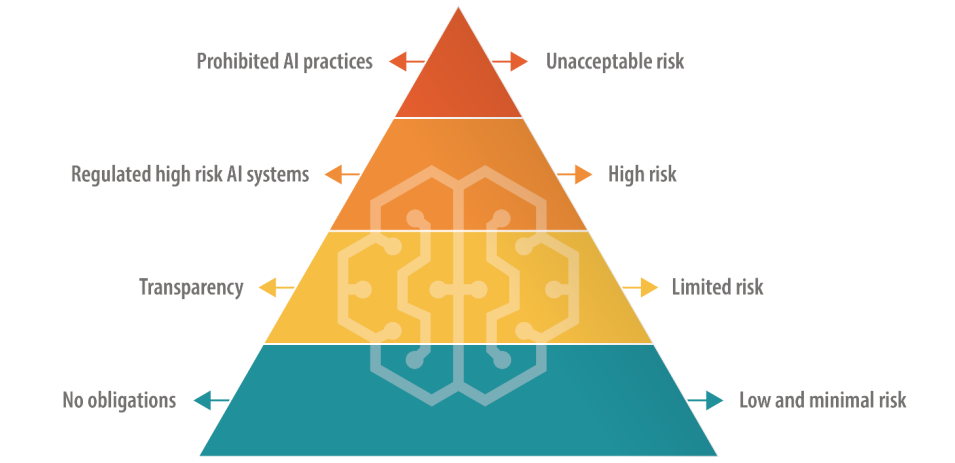

The European Parliament approved a draft law for the Act, which takes a tiered approach to regulating AI by sorting uses into different risk categories.

Tiers include a banned “unacceptable” risk category for AIs that jeopardize people’s safety and human rights and a “high risk” category for AIs that involve critical infrastructure, elections, employment, and other areas of potential harm.

Next are the “limited risk” and the “minimal risk” categories.

Wednesday’s vote confirmed several changes to the proposed law and passed with 499 votes in favor, 28 against, and 93 abstentions.

BREAKING: With overwhelming majority, EU Parliament adopts world’s first comprehensive AI Act.

EU sets global standard: AI must work for democracy, security & planet.

Bans emotion recognition & social media recommender systems classified ‘high risk’! https://t.co/BBrxznStdR pic.twitter.com/9fLwEiqKTf

— Alice St⭕️llmeyer (@StollmeyerEU) June 14, 2023

What were the amendments?

First, Parliament expanded the list of banned “unacceptable” risk AI uses, which now includes:

- AI for social scoring, where individuals are ranked based on their social behavior or personal characteristics.

- Real-time remote biometric identification systems in public spaces.

- “Post” (after-the-fact) remote biometric identification systems, except when law enforcement is pursuing serious crimes, and then only with judicial authorization.

- Biometric categorization systems that use sensitive characteristics like gender, race, ethnicity, citizenship status, religion, and political orientation.

- Predictive policing systems that depend on profiling, location, or past criminal behavior.

- Emotion recognition systems deployed in law enforcement, border management, workplaces, and educational institutions.

- Untargeted collection of facial images from the internet or CCTV footage for creating facial recognition databases.

Second-tier “high-risk” AI applications now include systems that could influence elections and recommendation algorithms used by large social media platforms.

As for generative AIs like ChatGPT, developers are required to actively assess and mitigate risks to health, safety, fundamental rights, the environment, democracy, and the rule of law. They’ll also need to register their models in the EU database to operate in the EU market.

Additionally, AI companies must increase safeguards against generating illegal content and provide detailed summaries of copyrighted training data. Other changes to the Act included exemptions for SMEs and open-source research activities.

Failure to comply could result in companies being forced to delete applications and in fines of up to 7% of their revenue. MEP Dragos Tudorache, a co-rapporteur for the Act, said, “There are plenty of sharp teeth in there.” Fines already issued for GDPR violations, including a €1.2bn fine for Meta and a €746m fine for Amazon, show that the EU is willing to impose penalties on this scale.

The European Parliament President, Roberta Metsola, described it as “legislation that will no doubt be setting the global standard for years to come,” adding that AI was entering “a new age of scrutiny.”

After the vote, MEP Brando Benifei, a co-rapporteur for the Act, said, “All eyes are on us today. While Big Tech companies are sounding the alarm over their own creations, Europe has gone ahead and proposed a concrete response to the risks AI is starting to pose. We want AI’s positive potential for creativity and productivity to be harnessed but we will also fight to protect our position and counter dangers to our democracies and freedoms during the negotiations with Council.”

Following Wednesday’s vote, the Act enters its final stages: negotiations among the European Parliament, the European Commission, and the Council of the European Union on the final text.

The Act is expected to come into force in 2026.